HackerRank Dataset Creation Methodology

Last updated: June 23, 2025

This document explains how HackerRank designs, builds, and delivers datasets across the SDLC to help customers accelerate software development.

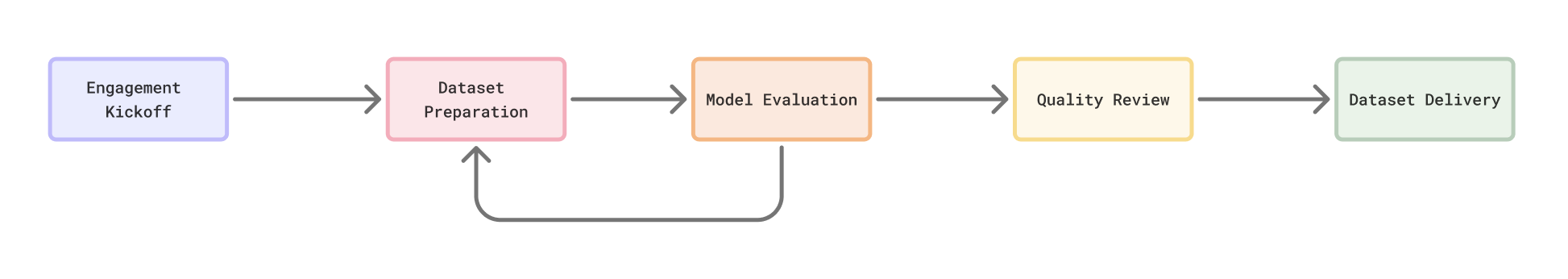

The workflow diagram below illustrates the HackerRank Dataset Creation Methodology.

Engagement kickoff

Each engagement starts with a focus on understanding your business context, technical goals, data requirements, and security constraints. This ensures that HackerRank is fully aligned before any project work starts.

Objective and scope alignment

The first step is to understand the use case and success criteria for your model. This ensures that all deliverables—from individual tasks to evaluation datasets—align with your end goal.

Use case definition: HackerRank’s machine learning (ML) scientists collaborate with your team to define the specific capability you want to improve, such as:

Code generation

Bug detection or bug fixing

Code summarization or explainability

Code refactoring or optimization

Test case generation

Secure coding or linting automation

Model context: Captures technical details such as:

Model architecture (for example, encoder-decoder, decoder-only, instruction-tuned)

Fine-tuning or post-training method (for example, full fine-tuning, LoRA, SFT, RLHF, DPO)

Token budget, latency targets, and context window constraints

Success metrics: Define both quantitative and qualitative goals, such as:

BLEU, CodeBLEU, exact match accuracy or code correctness (unit test pass rate)

Hallucination rate or failure rate reduction

Latency or inference cost per token

By defining key performance indicators (KPIs) and model behavior targets, HackerRank aligns downstream decisions—such as task selection and evaluation format—with your business priorities.

Timeline and milestone planning

The project is organized into distinct phases to support transparent and trackable execution.

The delivery timeline is collaboratively defined and divided into clear milestones:

Dataset structure finalization

Task sampling or pilot review

Evaluation setup and baselining

Final dataset delivery and sign-off

Each phase includes feedback checkpoints to maintain transparency.

Weekly syncs and shared project trackers ensure accountability and visibility.

Security and data residency

HackerRank adheres to industry-standard data privacy and security practices. During project kickoff, HackerRank finalizes your security and compliance requirements.

Data handling: If the engagement involves proprietary source code or model artifacts, the following are supported:

NDA-backed access controls

In-region data processing (for example, EU-only, India-only)

No-retention policies

Secure cloud handoff (for example, Amazon S3 with restricted access keys)

PII redaction: When working with production data (For example, code snippets from tickets or logs), custom pipelines are used for:

Personally identifiable information (PII) redaction (For example, names, emails, tokens, IP addresses)

Sensitive pattern masking (for example, API keys, database credentials)

Tooling isolation: All dataset creation occurs in isolated environments with access logs, version control, and auditability.

Stakeholder onboarding

Stakeholder roles are clearly defined on both sides at the beginning of each engagement.

Your organization:

Technical point of contact (for example, Model Owner, ML Engineer)

Business lead (for example, Product Owner, CTO)

Security or compliance representative

At HackerRank:

Engagement Manager

Subject Matter Expert (SME) Managers

Quality Assurance (QA) Lead

ML Scientists

HackerRank sets up a shared communication channels (Slack or email) and an optional shared drive for real-time updates and status reports.

Dataset preparation

HackerRank’s core strength lies in a global network of highly vetted subject-matter experts (SMEs) and a proven methodology to create production-grade, diverse code datasets. The process integrates domain expertise, structured workflows, and multi-layered quality assurance to ensure that every data point is accurate, explainable, and aligned with your goals.

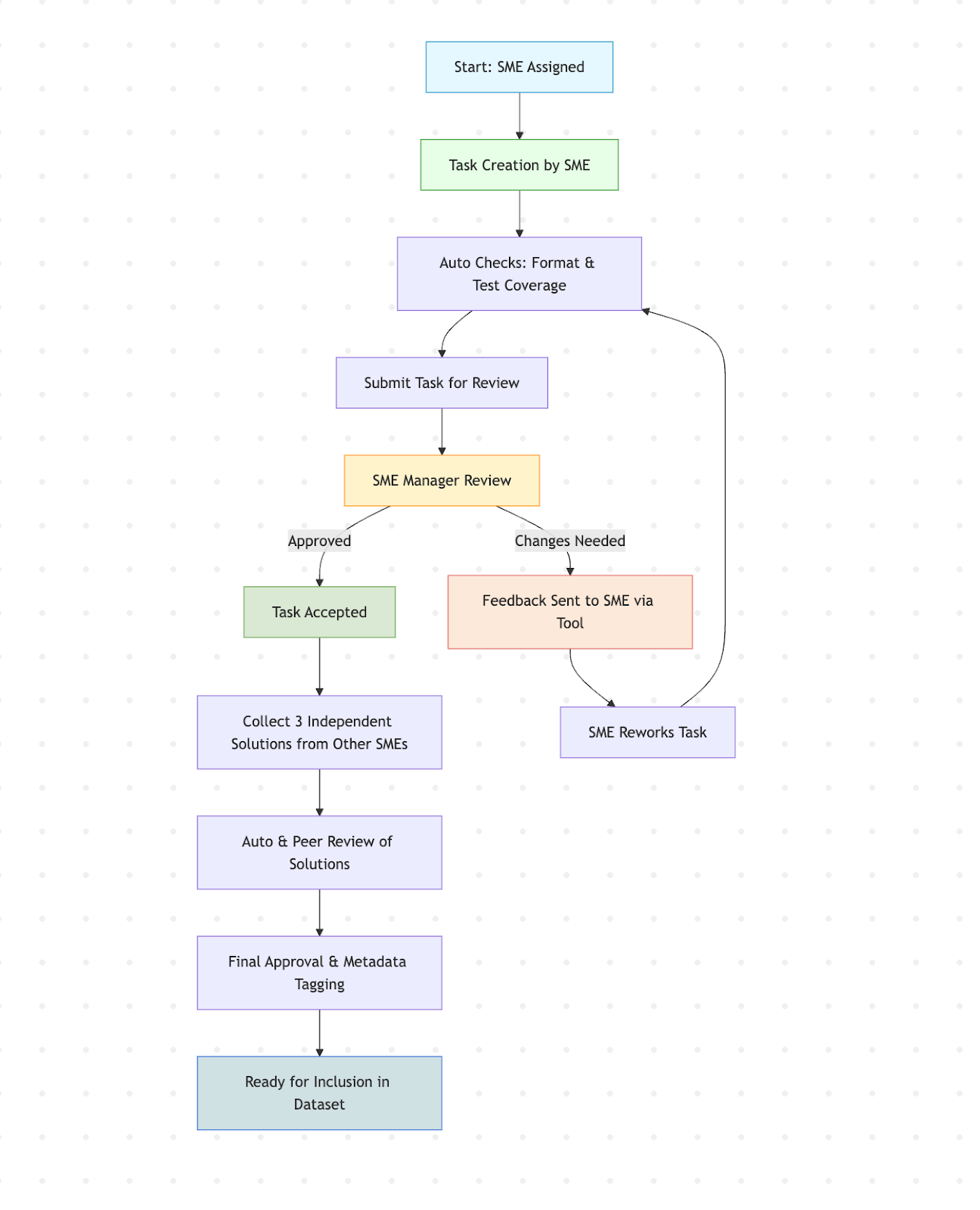

Expert-curated content generation

SME selection: Each dataset engagement begins with the selection of a dedicated panel of SMEs who possess verified expertise in the target languages, frameworks, and domains (for example, backend development, data engineering, DevOps).

Realistic task design: Each SME is responsible for designing original programming tasks that reflect authentic challenges seen in software development, such as implementing a REST API, debugging concurrency bugs, or refactoring legacy code. Tasks are aligned to customer objectives (for example, code generation, bug fixing, summarization) and vary in complexity and format (functions, classes, scripts, projects). The SMEs use internal authoring tools that provide real-time feedback on format compliance, tagging, and completeness. Dashboards that track author performance and dataset coverage are also maintained.

Task authoring guidelines: SMEs are trained with detailed, standardized instructions for task authoring. These include rules for:

Problem framing and clarity

Expected inputs/outputs

Coverage of edge cases

Test case design

Metadata requirements (for example, runtime constraints, expected performance)

Task review and approval: Every task undergoes structured review process led by an SME Manager (a senior content engineer with domain expertise) before being included in the dataset. The review process includes checks for technical correctness, clarity of instruction, real-world relevance, and compliance with authoring standards.

An internal toolset streamlines the review and feedback process to ensure task quality and accountability.Targeted feedback: The SME Manager can provide line-level comments directly within the task.

Revision loop: Rejected tasks are returned to the author with detailed feedback and suggested improvements. The author revises the task and resubmits it for review.

Version control: All edits are tracked through a version-controlled system with audit trails.

Performance tracking: Maintain dashboards that track author perofrmance (For example, time taken for each task creation) and dataset coverage.

Acceptance criteria: A task is approved only when it passes review with no blocking issues. Strict quality checks ensure that:

Only fully approved tasks are included in production datasets

Partially accepted or “good enough” tasks are excluded

This structured review process ensures that each task is polished, unambiguous, and suitable for training or evaluating large language models (LLMs).

Multiple solutions per task: To improve the diversity and robustness of your training data, each task is independently solved by at least three different SMEs. This provides a range of solution styles and allows models to learn from varied reasoning approaches. You can also configure the number of solutions required per task based on your specific needs.

Metadata and context capture

Each task and solution combination is annotated with detailed metadata to support downstream use, including:

Build and run instructions

Language version and dependency list

Time and space complexity analysis (when applicable)

Edge cases and expected failure modes

Performance tuning notes

This metadata ensures reproducibility, easy filtering, and better control over dataset slices (for example, by difficulty level, length, or bug type).

Automated and human QA

A dual-layer QA process is applied to maintain high submission standards:

Sanity checks: All submissions are validated through automated pipelines that execute test cases, perform linting for style and syntax, and verify consistency between each problem and its solution.

Peer review: Submissions are peer reviewed by another SME to ensure correctness, clarity, and compliance with defined standards. Any disagreements trigger structured arbitration before finalization.

Style and documentation checks: In addition to functional correctness, each solution follow idiomatic coding style, include helpful comments, and avoid anti-patterns. These checks are essential for fine-tuning developer assistants and code explanation models.

Model evaluation

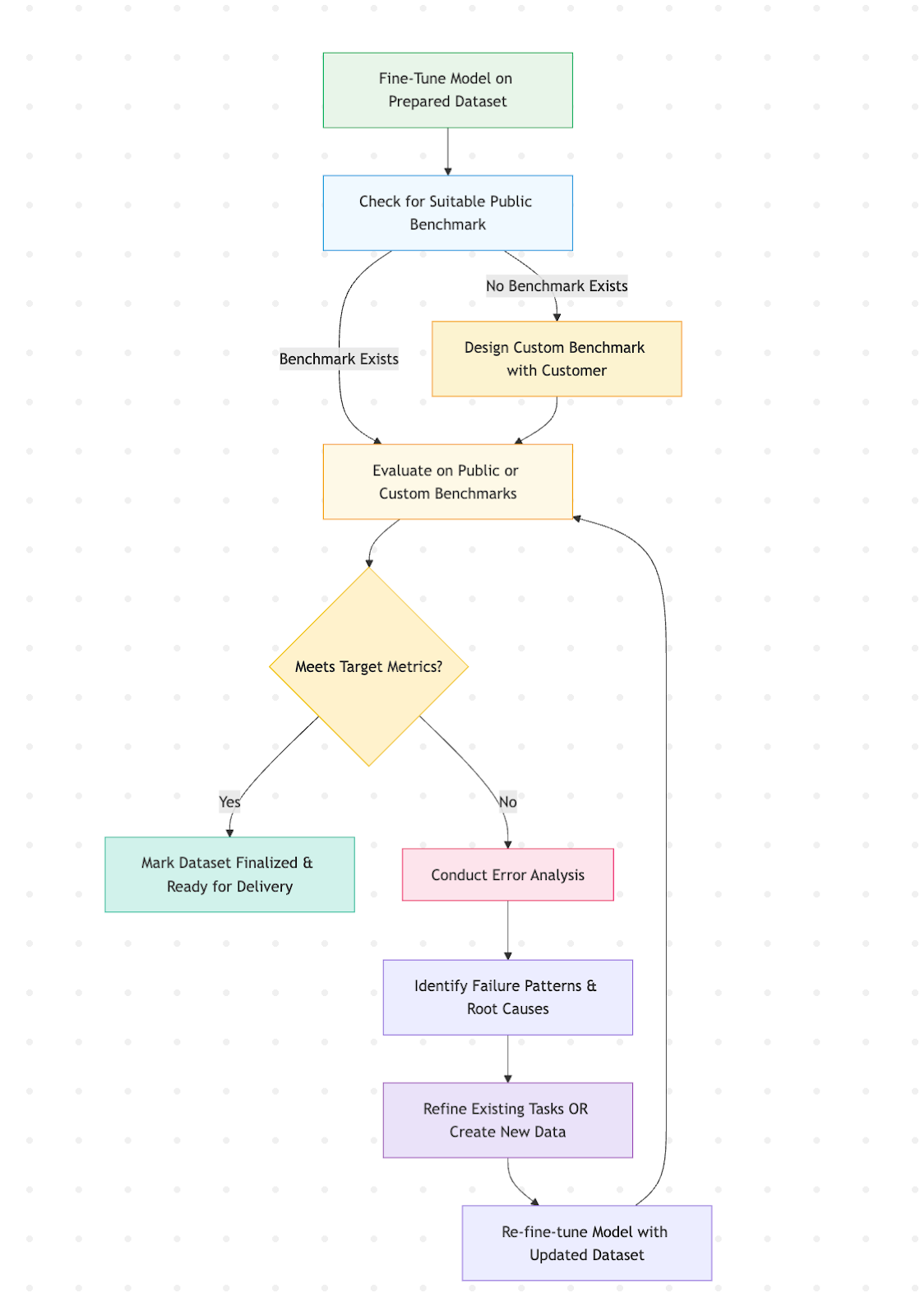

Once the dataset is finalized, it enters a structured evaluation phase to assess how well the model performs after fine-tuning with the new data.

Fine-tuning and initial evaluation

Model integration: The customer’s foundation model or foundation model of your choice is fine-tuned using the curated dataset. This includes:

Full fine-tuning

Instruction tuning

Few-shot prompt construction

Supervised or RLHF-style training, depending on context

Benchmarks: Model performance is evaluated using:

Standard benchmarks such as HumanEval, MBPP, and CodeXGlue

HackerRank’s ASTRA benchmark, customized for your use case

Custom test suites derived from your production use cases

Evaluation metrics aligned with your goals, such as BLEU, exact match accuracy, functional correctness, latency, and hallucination rates

If no suitable public benchmark exists for a task (for example, domain-specific language, refactoring), HackerRank will co-design a custom evaluation suite with the customer to ensure meaningful evaluation.

Evaluation design (if applicable)

When open-source benchmarks do not sufficiently address the use case, a custom evaluation suite is developed. This includes:

Task sets curated from your existing codebase

Manually evaluated gold labels from HackerRank SMEs

Metrics tailored to your business goals, such as readability, security, or test coverage

Edge cases and adversarial examples

Regression-safe subsets for future iterations

Performance feedback loop

If the model fails to meet the predefined success criteria, a structured feedback process is initiated.

Error analysis: Qualitative and quantitative analyses are performed, including:

Clustering of failure cases by problem type

Confusion matrix on labels or outputs

Code correctness breakdown (for example, syntax errors versus logic bugs)

Human review of model outputs when necessary

Diagnosis and attribution: Determine the root cause of performance gaps by evaluating whether they result from:

Insufficient coverage in the dataset

Ambiguous task instructions

Model underfitting or overfitting

Evaluation mismatches

Dataset Iteration: Identified issues are addressed by:

Refining existing tasks (for example, rewriting unclear problems)

Adding new examples to target weak areas

Diversifying solution styles or test scenarios

Continuous improvement loop

The evaluation and tuning process is iterative:

The model is continuously fine-tuned using updated datasets.

New model outputs are re-evaluated against benchmarks and regression-tested against previous versions.

A dataset or model version is approved for deployment only when:

Target primary metrics are achieved

No regressions are found in secondary metrics

The customer signs off

The goal is to close the loop between model behavior and dataset design, ensuring that the data you invest in leads to measurable improvements in real-world performance.

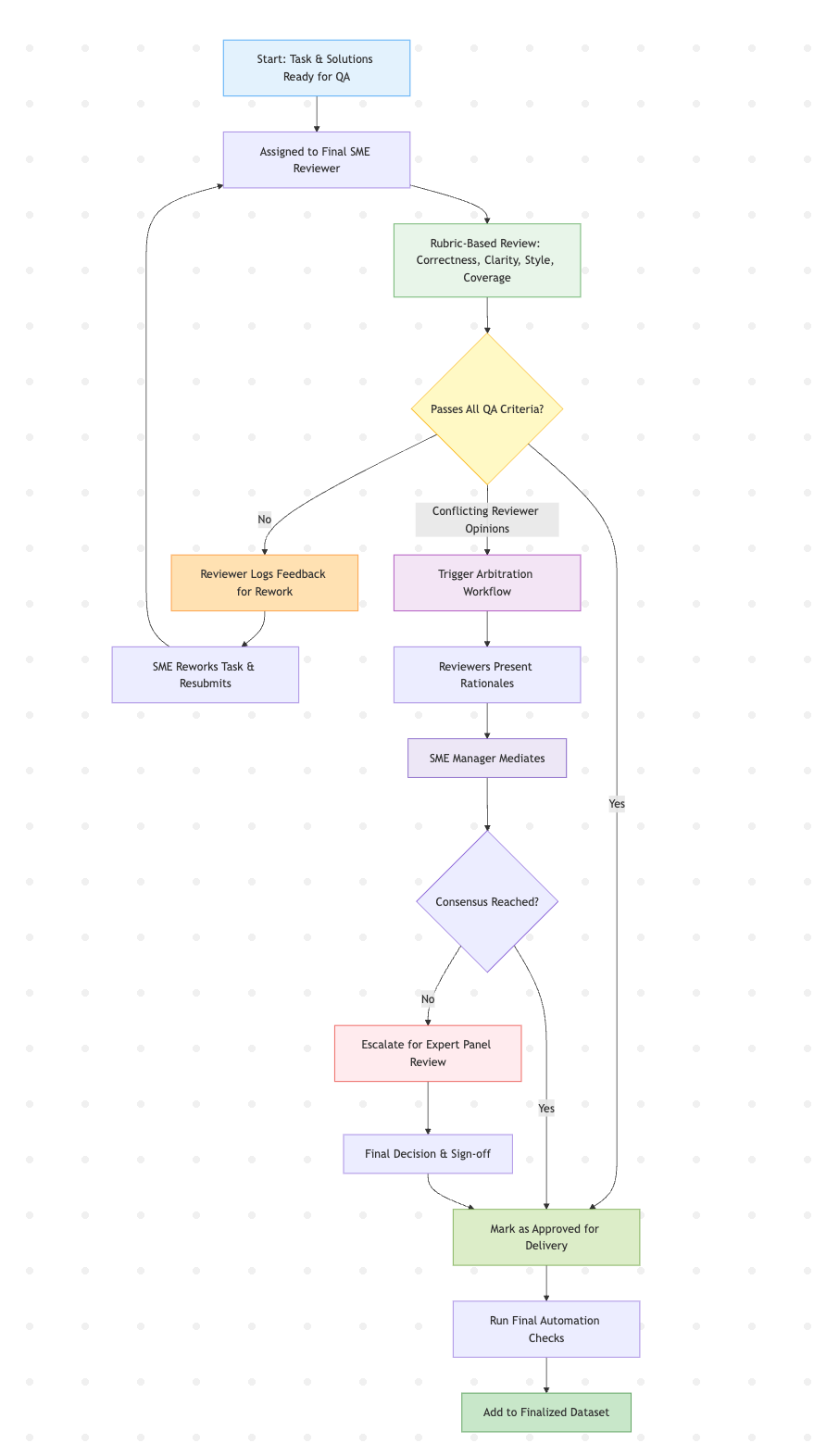

Quality review

Before delivery, each dataset undergoes a structured, multi-stage quality review to ensure that every task and solution meets standards of correctness, clarity, and consistency.

Final human review

Every task and associated solutions undergo a final manual audit by the senior SME reviewers who were not involved in the task creation. This unbiased checkpoint guarantees integrity across the dataset.

The following criteria are verified:

Business logic and technical correctness: Does the task represent a realistic and meaningful programming challenge? Do all solutions correctly address the stated problem?

Code style and formatting: Are the solutions idiomatic, readable, and aligned with language-specific style conventions? Is formatting consistent and instructional?

Clarity and completeness of explanations: If the dataset includes inline comments or external explanations, are they clear and accurate for an LLM to learn from?

Test coverage and execution behavior: Are edge cases tested? Do the test cases validate both correct and incorrect implementations? Are time and space constraints respected?

Rubric-based evaluation

Reviewers use a scoring rubric that breaks down each task into key dimensions (for example, clarity, correctness, completeness and, format) with defined pass/fail criteria and reviewer notes. This provides a consistent and transparent scoring system across reviewers.

Objective scoring ensures alignment with customer expectations.

Reviewer notes are logged per task for traceability and continuous improvement.

Dispute resolution and arbitration

If multiple reviewers disagree:

A structured arbitration workflow is initiated.

Each reviewer presents their assessment and rationale.

The SME Manager mediates to reach consensus.

A task finalized only after all concerns are resolved and the outcome is documented.

This process avoids subjective inconsistency and ensures no task proceeds with open questions.

Version control and audit trail

All task edits, feedback cycles, reviewer comments, and approvals are tracked using internal tools.

Change logs are maintained for each task.

All reviews are timestamped and attributed.

A complete revision history is available on request to show how and why a task evolved.

Tools and automation

While human reviewers lead the process, a final automation pass is also performed to:

Revalidate test cases and runtime behaviour

Detect formatting drift and file inconsistencies

Verify integrity hashes (for example, SHA-256) to support reproducibility.

Dataset delivery

After a dataset passes all quality checks and receives required approvals, HackerRank initiates a secure and verifiable delivery process. The delivery workflow is designed for traceability, reproducibility, and seamless integration with ML pipelines.

Packaging and finalization

Before delivery, all components of the dataset are packaged to ensure it is portable, verifiable, and self-contained.

Signed-off content

All tasks, solutions, and metadata are approved through the QA process.

Includes applicable test files, build scripts, and runtime instructions, where applicable.

Task-to-SME attribution is included if required (anonymized when necessary).

Versioning and integrity

The dataset is packaged as a structured archive (for example, .tar.gz, .zip) with consistent file paths.

Integrity hashes (for example, SHA-256) are included for all files and the entire archive.

Git-based diffs for change tracking between dataset versions.

Documentation bundle

README file with dataset structure and usage instructions.

Data schema or annotation format definitions are included.

Security notes and handling instructions are documented.

Delivery channels

HackerRank supports multiple secure, enterprise-compliant delivery channels:

Encrypted cloud handoff (for example, AWS S3 pre-signed URL, GCS secure bucket, Azure Blob link)

Customer-designated secure FTP or VPN endpoints

All deliveries are logged and traceable.

Customer walkthrough

Upon request, HackerRank provides a walkthrough session with your team to:

Review the dataset structure and evaluation setup

Clarify metadata, labelling conventions, or expected usage patterns

Address integration questions from data scientists or ML engineers

Collect feedback for future dataset iterations

Archiving and retention

Once delivery is confirmed:

HackerRank retains a copy of the dataset and delivery logs for 30 to 90 days (configurable).

After the retention window, the dataset is securely purged unless otherwise agreed.

A formal delivery completion report is issued, including:

Timestamp of delivery

Integrity verification record

Summary of QA coverage